This is taken from Statistical Rethinking Chapter 6

import pandas as pd

import torch

import torch.tensor as tensor

import pyro

import pyro.distributions as dist

from pyro.infer import SVI, Trace_ELBO

from pyro.infer.autoguide import AutoDiagonalNormal

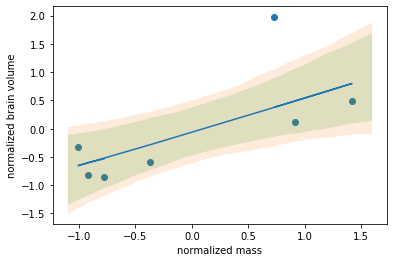

sppnames = ["afarensis", "africanus", "habilis", "boisei", "rudolfensis", "ergaster", "sapiens"]

brainvolcc = [438., 452., 612., 521., 752., 871., 1350.]

masskg = [37.0, 35.5, 34.5, 41.5, 55.5, 61.0, 53.5]

d = pd.DataFrame({"species": sppnames, "brain":brainvolcc, "mass": masskg})

brain = tensor(d['brain'], dtype=torch.float)

mass = tensor(d['mass'], dtype=torch.float)

def modelLM(x, y):

sigma = 1.

weight = pyro.sample("weight", dist.Normal(0., 1.))

y_pred = weight * x

bias = pyro.sample("bias", dist.Normal(x.mean(), 100.))

y_pred = y_pred + bias

y = pyro.sample("y", dist.Normal(y_pred, sigma), obs=y)

return y

adam = pyro.optim.Adam({"lr": 0.03})

guide = AutoDiagonalNormal(modelLM)

svi = SVI(modelLM, guide, adam, loss=Trace_ELBO())

num_iterations = 5000

pyro.clear_param_store()

losses = []

for j in range(num_iterations):

# calculate the loss and take a gradient step

loss = svi.step(mass, brain)

if j % 100 == 0:

print("[iteration %04d] loss: %.4f" % (j + 1, loss / len(brain)))

losses.append(loss)

print(guide.quantiles([0.25, 0.5, 0.75]))

results in

{'bias': [tensor(20.3188), tensor(20.4905), tensor(20.6623)],

'weight': [tensor(15.4664), tensor(15.4902), tensor(15.5140)]}

while the expected bias is:

bias ~ -227.6287

weight ~ 20.6889

The losses are as high as 89155, which suggests there is something wrong in the way I am either definiing the loss or the choice of guide function. Any pointers?