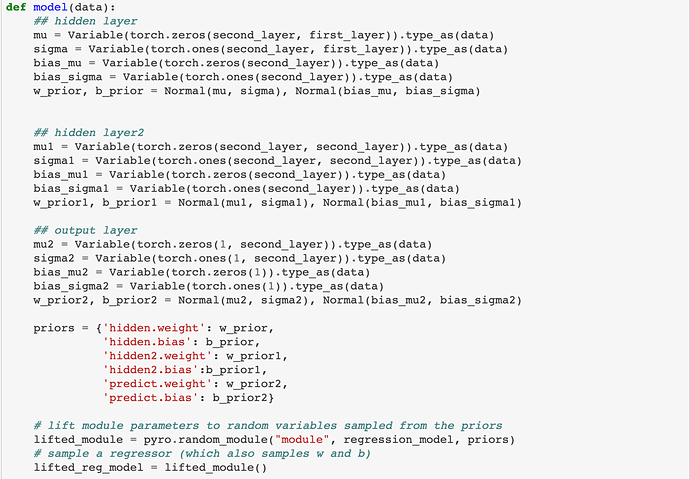

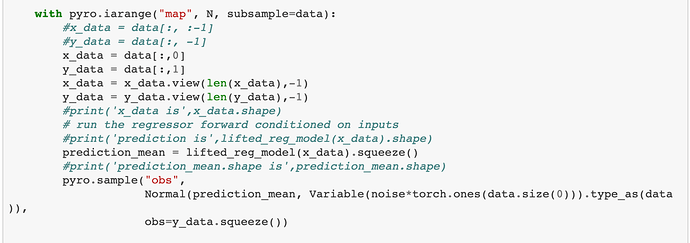

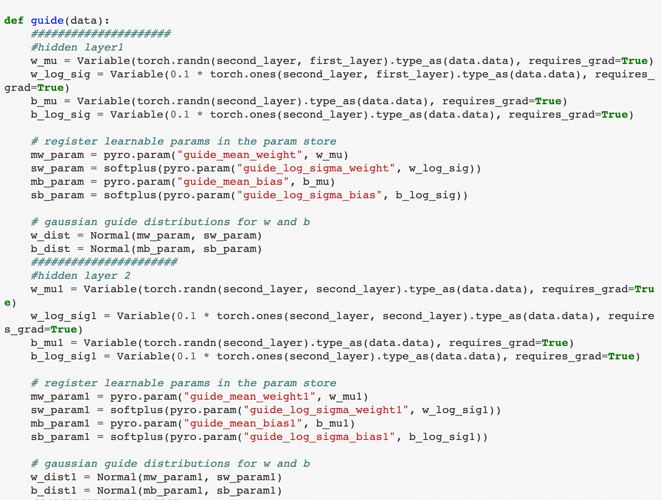

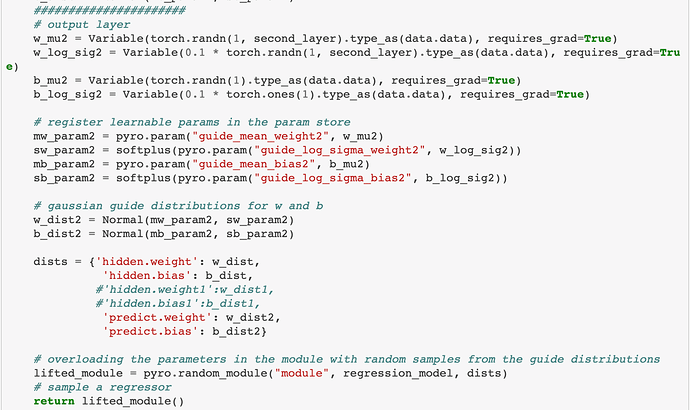

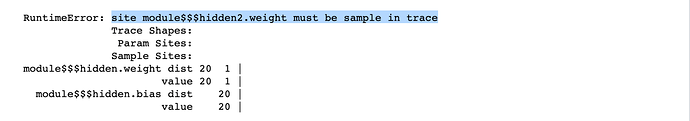

Hi all. I am new to Pyro and want to use it for Bayesian inference. I started with a single-layer Bayesian neural net for regression, and it works. However, one layer is not enough to fit my dataset, so I want to improve the fit by extending it to a multi-layer neural net. The naive approach of adding more parameters doesn't work: the error message says “site module$$$hidden2.weight must be sample in trace”. I don't understand why this fails and hope more experienced users can help. I am attaching screenshots of my model and guide definitions. The full code can be found here: bayesianNN/Pyro-bayesianNN-exercise.ipynb at master · luningsun/bayesianNN · GitHub

You're missing the distributions for hidden2 in your guide. The guide needs to mimic your model.

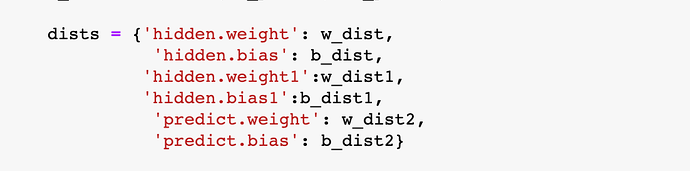

Thank you for your reply. I thought you meant I should uncomment the “dists” in the guide, but the same error occurs after I did that.

Look at the dists in your model: you need to have the same ones in your guide. Namely, you are missing hidden2.weight and hidden2.bias.
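A quick way to catch this kind of mismatch before running inference is to compare the keys of the two dictionaries. The sketch below is plain Python and only illustrates the idea: `missing_sites` is a hypothetical helper, the strings stand in for the real distribution objects, and the `hidden1` and `out` names are assumptions (only `hidden2.weight` and `hidden2.bias` come from this thread).

```python
def missing_sites(model_dists, guide_dists):
    """Return names that have a prior in the model but no entry in the guide."""
    return sorted(set(model_dists) - set(guide_dists))


# Placeholder strings stand in for the actual Pyro distribution objects.
# Layer names other than hidden2 are assumed for illustration.
model_dists = {
    "hidden1.weight": "prior",
    "hidden1.bias": "prior",
    "hidden2.weight": "prior",
    "hidden2.bias": "prior",
    "out.weight": "prior",
    "out.bias": "prior",
}
guide_dists = {
    "hidden1.weight": "posterior",
    "hidden1.bias": "posterior",
    "out.weight": "posterior",
    "out.bias": "posterior",
}

missing = missing_sites(model_dists, guide_dists)
print(missing)  # ['hidden2.bias', 'hidden2.weight']
```

If the returned list is non-empty, those are the names the guide still needs entries for.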

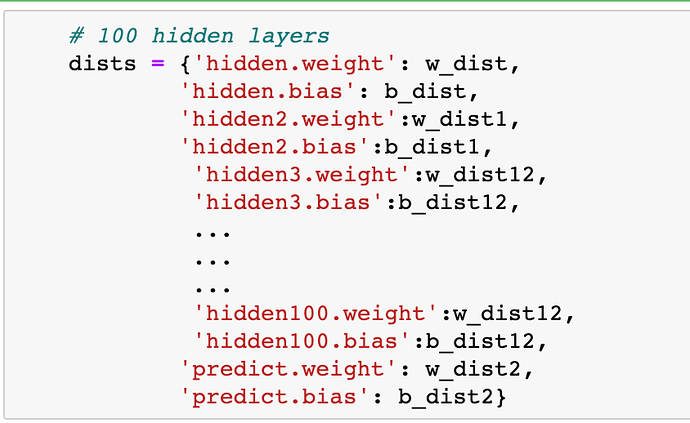

Thank you very much! I never paid attention to that part before, and it works now, though the performance didn't seem to improve with multiple layers. I think I need to spend some time finding the reason. I also have another question: if I have 100 hidden layers, does Pyro have any tricks for defining them other than enumerating every layer? Writing out every layer is tedious and prone to mistakes like the one in the attached figures.

Well, you will have to define the distributions somewhere, but you can do this programmatically in Python. Something like:

locs = [...]

scales = [...]

weight_dists = [Normal(locs[i], scales[i]) for i in range(100)]

dist_dict = {'hidden{}.weight'.format(i): weight_dists[i] for i in range(100)}

Edit: if you want to use the same distribution for every layer, you can just pass the Pyro distribution object to random_module(), but that's probably not what you want.

Thank you very much! @jpchen